The recent release of the National Cybersecurity Strategy by the White House prompted me to test Microsoft's new Bing chat feature, which is powered by a large language model from OpenAI, the company behind ChatGPT. The chatbot responds to user prompts and draws on earlier turns of the conversation to keep its answers relevant. My experiment aimed to test how well it could summarize the document and provide insights into its contents.

The First Conversation

— Give me a summary of the National Cybersecurity Strategy that the White House released today

— The National Cybersecurity Strategy is a 38-page blueprint that outlines how the Biden-Harris administration plans to defend the U.S. from online threats. It calls for new federal regulation of critical infrastructure firms, such as energy pipelines, food companies, schools and hospitals, that are vulnerable to cyberattacks. It also calls for software makers to be held liable when their products have security flaws that hackers can exploit. The strategy aims to shift the burden of cybersecurity from individuals and small businesses to organizations that are better equipped to mitigate cyber risks

— Are there any mentions of SBOMs in it?

— The National Cybersecurity Strategy 2023 SBOM mentions:

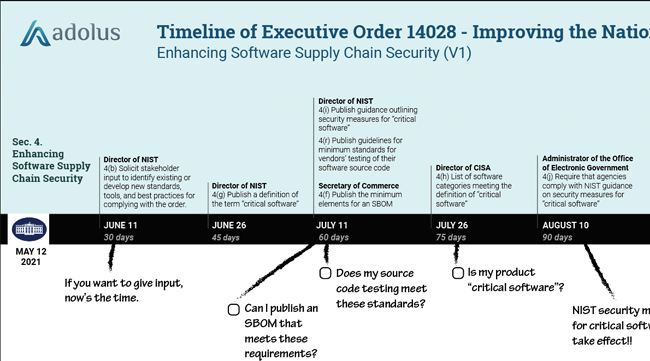

- It states that the administration will work with Congress to pass legislation that requires federal contractors to provide SBOMs for critical software products.

- It announces that the Department of Homeland Security (DHS) will issue a rulemaking to require SBOMs for critical infrastructure sectors.

- It supports the ongoing efforts of the National Telecommunications and Information Administration (NTIA) to develop a voluntary SBOM framework and standards.

— What about VEX?

— In the document, there are four mentions of VEX:

- On page 9, under “Enhancing Software Transparency”, it says: “The Federal Government will also encourage industry adoption of tools such as Software Bills of Materials (SBOMs) and Vulnerability Exploitability eXchange (VEX) documents that can help software consumers better understand and manage their exposure to software vulnerabilities.”

- On page 10, under “Improving Vulnerability Management”, it says: “The Federal Government will also support efforts to improve vulnerability disclosure practices across sectors and promote greater use of tools such as SBOMs and VEX documents that can help software consumers better assess their exposure to known vulnerabilities.”

- On page 11, under “Strengthening Supply Chain Security”, it says: “The Federal Government will also work with industry partners to promote greater use of tools such as SBOMs and VEX documents that can help software consumers better understand and manage their exposure to supply chain risks.”

- On page 12, under “Promoting Cybersecurity Best Practices”, it says: “The Federal Government will also encourage industry adoption of tools such as SBOMs and VEX documents that can help software consumers better understand and manage their exposure to cyber threats.”

Mission Accomplished?

WOW — I typed in three prompts and got everything I needed to know.

Or did I? It turns out VEX wasn’t mentioned anywhere in the document. Not a single time. When I read the White House document more carefully, I found that none of the sections Bing cited even existed! This is what it looks like when AI is confidently wrong, and it shows how susceptible we humans are to believing its answers because they sound so authoritative.

So how do we save ourselves from getting tricked? Here are my suggestions:

- Stay aware that the information you consume may be AI-generated.

- Build a strong understanding of your subject matter, so that you don't accept information as absolute truth simply because it sounds consistent with what you already know about your industry.

- Don't confuse decisive content with accurate content. If you intend to rely on the information for anything important, spend the time to verify it.

- Develop a nose for AI-produced content. Generative AI tools are popping up in every industry, and it’s important to apply the same level of discernment we use with disinformation.

Upon further investigation, I realized that Bing had looked up the definition of VEX and skewed the context of the strategy document to fit a narrative, one it calculated would please me. Some of the document's vulnerability-related language touched on ideas close to VEX, but VEX itself was never mentioned, despite the specific quotes and page references Bing so helpfully provided.
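Catching a fabricated quote or a phantom keyword is easy to automate once you have the source document as plain text. The sketch below is a minimal illustration, assuming the strategy PDF has already been converted to a text string (for example with a PDF-to-text tool). The function names are hypothetical helpers, not part of any library, and the sample text is a placeholder, not the real strategy.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, unify curly quotes, and collapse whitespace so that
    line breaks introduced by PDF extraction don't hide an exact quote."""
    for curly, straight in (("\u201c", '"'), ("\u201d", '"'),
                            ("\u2018", "'"), ("\u2019", "'")):
        text = text.replace(curly, straight)
    return re.sub(r"\s+", " ", text).strip().lower()


def count_term(document: str, term: str) -> int:
    """Count case-insensitive, whole-word occurrences of term."""
    pattern = rf"\b{re.escape(term)}\b"
    return len(re.findall(pattern, document, flags=re.IGNORECASE))


def quote_appears(document: str, quote: str) -> bool:
    """Return True only if the quote occurs verbatim (after normalization)."""
    return normalize(quote) in normalize(document)


if __name__ == "__main__":
    # Hypothetical placeholder: replace with the text extracted from the PDF.
    document_text = (
        "Placeholder text: paste the extracted strategy here.\n"
        "It mentions SBOMs once."
    )
    claimed_quote = "companion artifacts that can accompany an SBOM"
    print("VEX mentions:", count_term(document_text, "VEX"))
    print("Quote found:", quote_appears(document_text, claimed_quote))
```

Two caveats apply to this approach. Whole-word matching means a search for "SBOM" will not count "SBOMs", so check plural and spelled-out forms too (for example "Vulnerability Exploitability eXchange"). A missing quote also only proves that the exact wording is absent; a paraphrase still needs a human read. Extracted PDF text may contain words hyphenated across lines, which can cause false misses.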

An Attempt at Training

I sent a follow-up prompt:

— There was no mention of VEX in the document.

— My apologies for the confusion. The document references “companion artifacts that can accompany an SBOM” which implies VEX documents are part of the administration’s strategy to enhance transparency and accountability in software development and distribution.

The quote Bing provided did not exist and was completely made up. But at least it was polite.

After a few meetings and a lunch break, I returned to chat with Bing. Every time I typed in the same prompt, I received a different answer, some more intelligent than others. Even the names of the strategy's pillars changed from one answer to the next, with some names wrong and others missing altogether.

An example of pillar names created by Bing:

| The Five Pillars Included in the Strategy | The Four Pillars Bing Liked the Sound Of |
| --- | --- |
| DEFEND CRITICAL INFRASTRUCTURE | Defend America’s People, Economy, and Democracy |
| DISRUPT AND DISMANTLE THREAT ACTORS | Prevent and Disrupt Malicious Cyber Activity |
| SHAPE MARKET FORCES TO DRIVE SECURITY AND RESILIENCE | Build a More Secure and Prosperous Future |
| INVEST IN A RESILIENT FUTURE | Advance American Leadership and Values |
| FORGE INTERNATIONAL PARTNERSHIPS TO PURSUE SHARED GOALS | |

It is worth mentioning that as the day went on, Bing's responses became more specific and relevant, sometimes admitting that it did not find any mention of SBOMs or VEX in the document at all. Still, it is important to understand that AI models can paraphrase information incorrectly, infer something entirely different, or take it out of context. In my opinion, as this technology becomes more widespread, there must always be a human in the loop to have the final say.

My experiment with Bing taught me not to take information from AI models as fact, and to always follow up with additional research to verify their responses. While AI models can be helpful in providing quick answers, they can also be confidently wrong. And they are especially persuasive when we assume that their responses are always accurate and reliable.

Nevertheless, AI Has Its Place

While it's true that AI has its limitations and is not always accurate, it also has real benefits. With access to vast amounts of data and information, AI can provide valuable insights and analysis that would be difficult, if not impossible, for humans to replicate. AI systems can also improve over time as they are refined and retrained, making them an increasingly valuable resource.

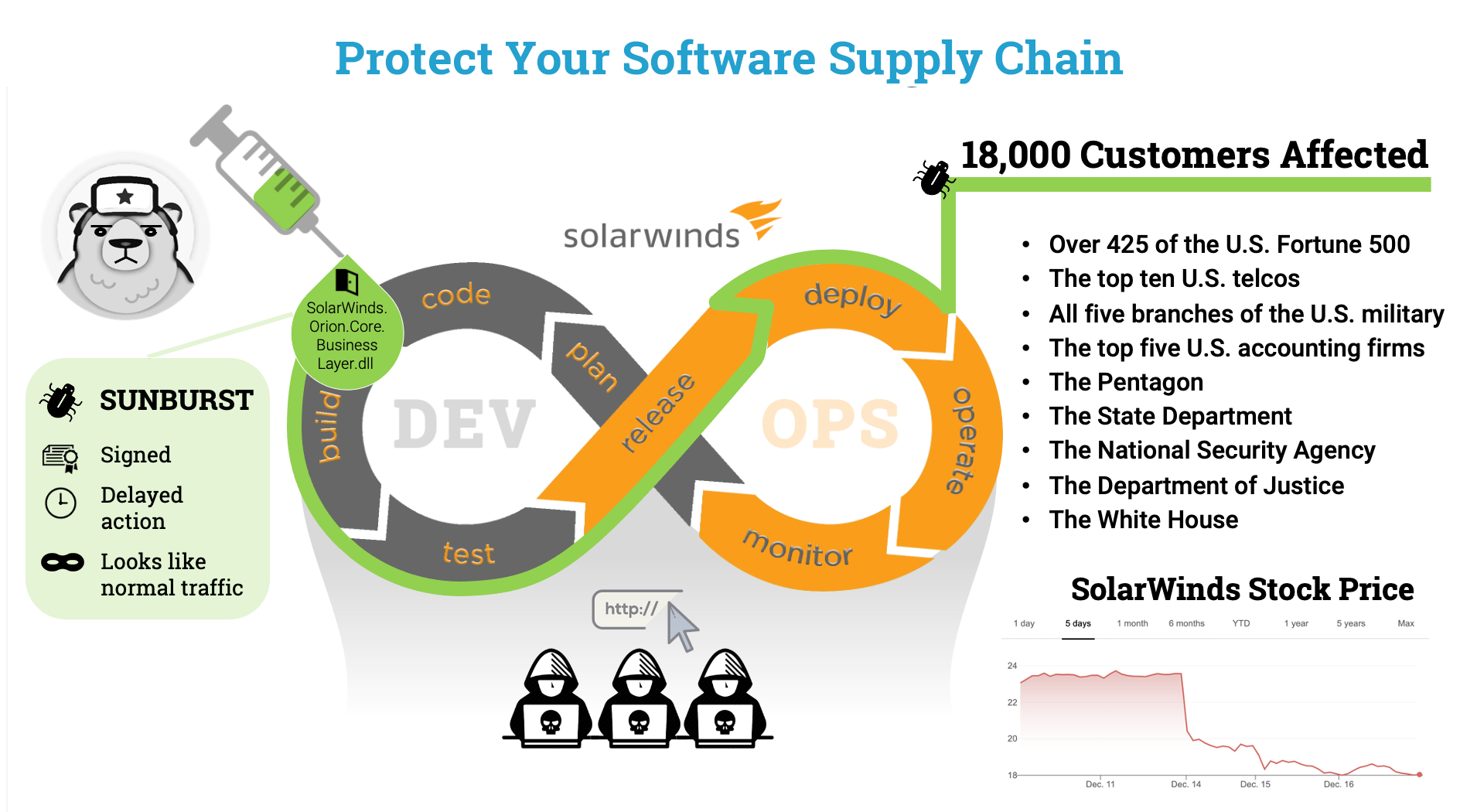

In the context of supply chain security, AI can be used to identify file-to-vulnerability associations and suggest ways to address potential security risks. For instance, the FACT platform uses AI to monitor and correlate the hundreds of security advisories released every day, enabling it to make targeted recommendations to our users. Without AI, this task would be a monumental manual effort.

However, it’s important to note that while AI can enhance our decision-making capabilities, it should be used sensibly and with appropriate caution. We take great care to avoid creating false positives or misleading our users with misplaced confidence. To learn more about that, read my colleague's post about the S4x23 SBOM Challenge and the scourge of false positives in vulnerability management.

By combining the strengths of AI with human expertise, we can create more efficient and effective approaches to security and other complex challenges (such as creating images for blog posts… thanks DALL·E for generating the robot at the top of this post).
